the last invention

A history of the singularity, piece by piece, as explored by the thinkers who got us to where we are.

The von Neumann Singularity

From Stanislaw Ulam's obituary of von Neumann: the two had discussed "the ever accelerating progress of technology... which gives the appearance of approaching some essential singularity in the history of the race beyond which human affairs, as we know them, could not continue." Origin of the term, I think? (Correct me if I'm wrong.)

Profiles of the Future / BBC Horizon

Clarke predicts machines will "start to think, and eventually completely out-think their makers."

Arthur C. Clarke - BBC Horizon (1964).

"Speculations Concerning the First Ultraintelligent Machine"

Good worked with Turing at Bletchley Park. This paper gives the intelligence explosion its first rigorous formulation: if a machine can improve its own design, you get recursive self-improvement (RSI). This is basically the next "threshold" we are approaching, so it's worth reading right now. RSI is all the hype.

Mind Children

Moravec argues robots will reach human-level intelligence by the 2040s. Good place to start, if you're not going to start with Kurzweil.

"The Coming Technological Singularity"

The paper that named it. Presented at a NASA symposium. Vinge's core argument: we can't model what comes after superintelligence, any more than a goldfish can model economics. (Vinge is also one of my favorite sci-fi writers; his stuff is great.)

Vernor Vinge - Groupminds, Singularity University (2012).

Robot: Mere Machine to Transcendent Mind

Moravec doubles down with specific forecasts. Haven't read this one yet.

The Age of Spiritual Machines

Kurzweil draws exponential curves through the history of computation and extends them forward: the law of accelerating returns. Machines match human intelligence by 2029 (seemed ridiculous when I first read it, and now it seems insanely on point?). To be clear though, while his timelines seem super prescient, it doesn't really feel like we've taken the Kurzweil path to get here.

The Singularity Is Near

The one that brought it mainstream. By 2045, human and machine intelligence merge. Exhaustively researched, relentlessly optimistic. You'll either find it prophetic or maddening, possibly both. This is where I first met the term, after grabbing this book from my parents' bookshelf as a teenager.

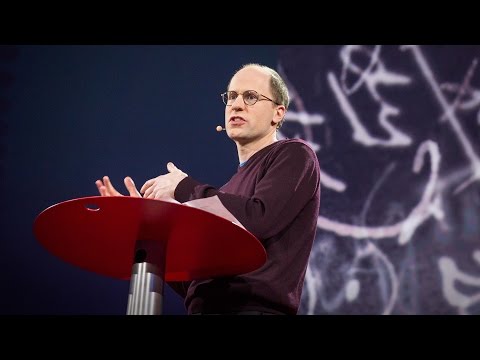

Superintelligence: Paths, Dangers, Strategies

Bostrom maps the paths to superintelligence and the ways it could go wrong. His paperclip maximizer thought experiment reached a wide audience through this book. This might be the single most important book on the list if you're only going to read one.

Nick Bostrom - What happens when our computers get smarter than we are? (TED 2015).

Life 3.0

Accessible survey of the superintelligence landscape. Tegmark maps outcomes from utopia to extinction. A little on the pop-sci end but worth reading.

Reality+

Chalmers on virtual worlds, simulation, and what happens to "reality" when intelligence can be manufactured. Broader than the singularity specifically but relevant.